This repository was archived by the owner on Oct 23, 2024. It is now read-only.

Commit f63527a

[SPARK-21502][MESOS] fix --supervise for mesos in cluster mode

## What changes were proposed in this pull request?

With supervise enabled for a driver, re-launching it failed because the re-launched driver reused the same framework id. This patch creates a new driver framework id every time we re-launch a driver, but keeps the driver submission id the same, since that is the task id the driver was launched with on Mesos, and the retry state and other info within the Dispatcher's data structures use it as a key.

We append a "-retry-%4d" string as a suffix to the framework id passed by the dispatcher to the driver, and the same value to the app_id created by each driver, except for the first launch, where we don't need the retry suffix.

The previous format for the frameworkId was 'DispatcherFId-DriverSubmissionId'.
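The naming scheme described above can be sketched roughly as follows. This is a minimal illustration, not the code from the patch: the object name, method name, and parameters are hypothetical, and the sketch appends the plain retry count, whereas the patch may format the number differently.

```scala
// Hypothetical helper; names are illustrative and not taken from the patch.
object DriverFrameworkId {
  val RetrySeparator = "-retry-"

  /**
   * Builds the framework id handed to a driver.
   * retries = None for the first launch, Some(n) for the n-th re-launch.
   */
  def build(
      dispatcherFrameworkId: String,
      submissionId: String,
      retries: Option[Int]): String = {
    // Base part follows the 'DispatcherFId-DriverSubmissionId' format.
    val base = s"$dispatcherFrameworkId-$submissionId"
    // Assumption: plain decimal count; the patch may pad the number differently.
    val suffix = retries.map(n => s"$RetrySeparator$n").getOrElse("")
    base + suffix
  }
}
```

Calling `DriverFrameworkId.build(fid, submissionId, None)` yields the base id for the first launch, while passing `Some(n)` yields a distinct id for each re-launch. The submission id itself is never modified.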

We also detect the case where multiple Spark contexts are started from within the same driver, and we set proper names for their corresponding app ids. The old practice was to unset the framework id passed from the dispatcher after the driver framework was started for the first time and let Mesos decide the framework id for subsequent Spark contexts. The decided framework id was then passed as the app id.
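One way to picture the handling of multiple Spark contexts is a per-JVM counter that leaves the first context's id untouched and suffixes later ones. The sketch below assumes this design; `AppIdHelper` and `nextAppId` are hypothetical names, and the exact suffix format used by the patch is not shown in this description.

```scala
import java.util.concurrent.atomic.{AtomicBoolean, AtomicLong}

// Hypothetical per-JVM helper; names are illustrative and not taken from the patch.
object AppIdHelper {
  private val startedBefore = new AtomicBoolean(false)
  private val nextContextNumber = new AtomicLong(0)

  /** Returns the app id for the next Spark context started in this driver JVM. */
  def nextAppId(driverFrameworkId: String): String = {
    // getAndSet returns false only for the very first caller.
    if (startedBefore.getAndSet(true)) {
      // Assumption: a plain increasing counter; the real suffix format may differ.
      s"$driverFrameworkId-${nextContextNumber.incrementAndGet()}"
    } else {
      driverFrameworkId
    }
  }
}
```

Atomic types keep the numbering consistent even if contexts are created from different threads, which is why the sketch avoids a plain `var`.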

This patch heavily affects the history server. We don't have the issue of the standalone case, where the driver id must be different, since the dispatcher re-launches a driver (Mesos task) only if it gets an update that the driver is dead, and this is verified by Mesos implicitly. We also don't fix the fine-grained mode, which is deprecated and of no use.
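The interaction between the stable submission id and the re-launch decision can be modelled with a small sketch. It is a simplified, hypothetical model (`DriverState`, `RetryTracker`, and `onDriverDead` are invented for illustration) and does not reproduce the dispatcher's actual data structures.

```scala
import scala.collection.mutable

// Hypothetical, simplified model of dispatcher state; not the patch's actual classes.
final case class DriverState(submissionId: String, supervise: Boolean, retries: Int)

class RetryTracker {
  // Keyed by submission id, which stays the same across re-launches.
  private val drivers = mutable.Map.empty[String, DriverState]

  def register(submissionId: String, supervise: Boolean): Unit =
    drivers(submissionId) = DriverState(submissionId, supervise, retries = 0)

  /**
   * Called when a status update reports the driver task as dead.
   * Returns the updated state if the driver should be re-launched.
   */
  def onDriverDead(submissionId: String): Option[DriverState] =
    drivers.get(submissionId).filter(_.supervise).map { state =>
      val updated = state.copy(retries = state.retries + 1)
      drivers(submissionId) = updated
      updated
    }
}
```

Because the map key never changes, the retry count accumulates across re-launches, and that count is what a fresh framework id could be derived from on each attempt.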

## How was this patch tested?

This was manually tested on DC/OS. Launched a driver, stopped its container, and verified the expected behavior.

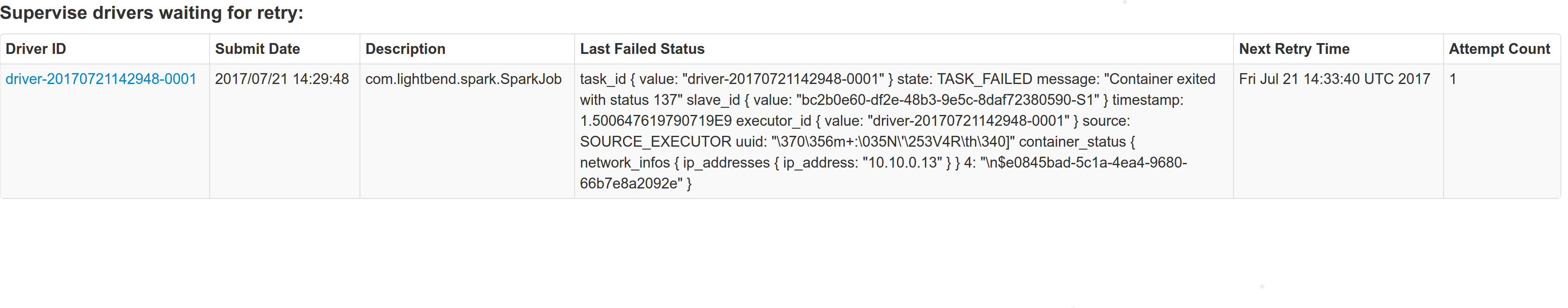

Initial retry of the driver, driver in pending state:

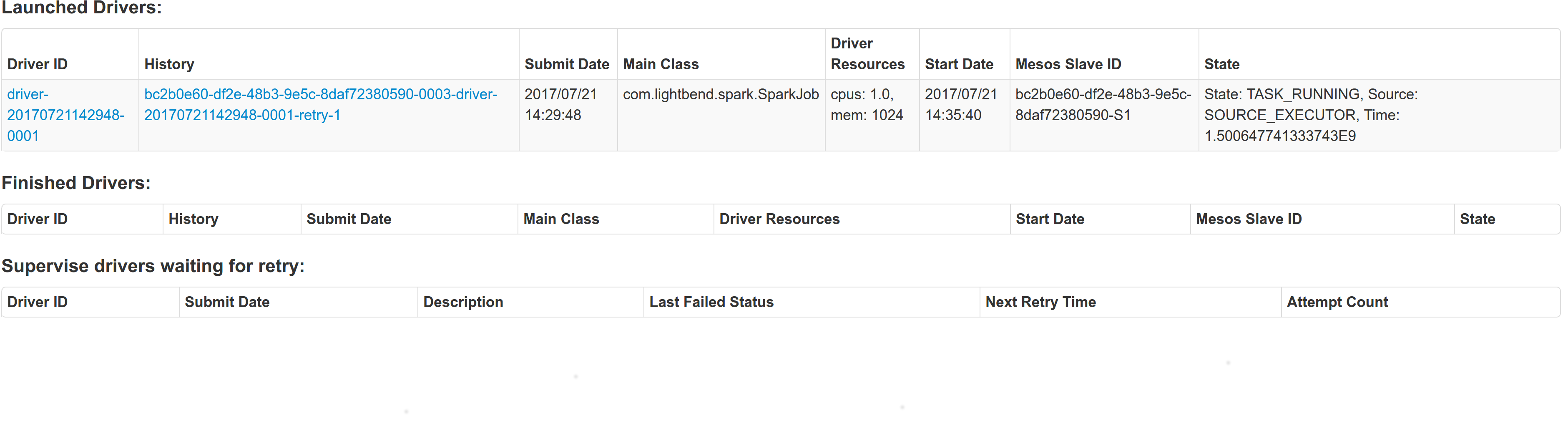

Driver re-launched:

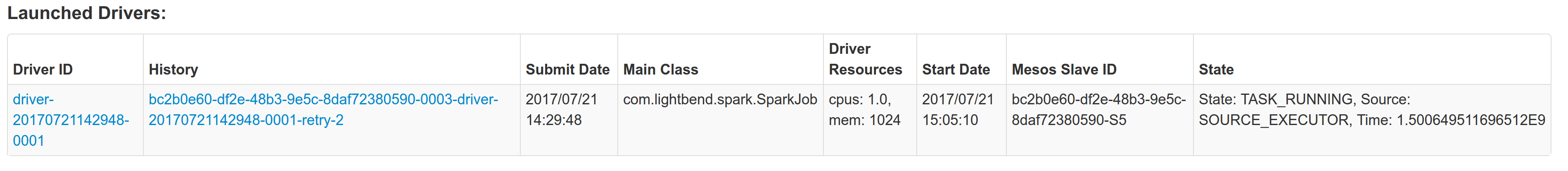

Another re-try:

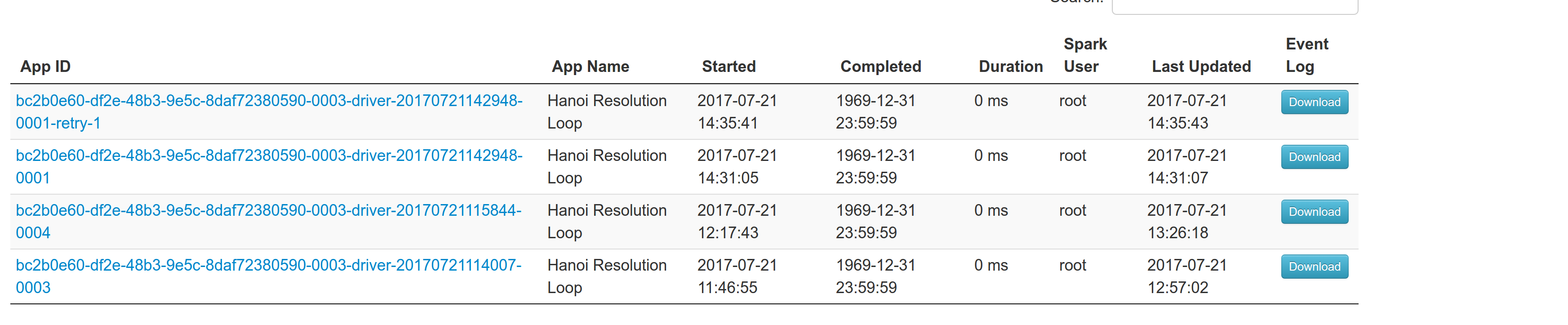

The resulting entries in the history server, at the bottom:

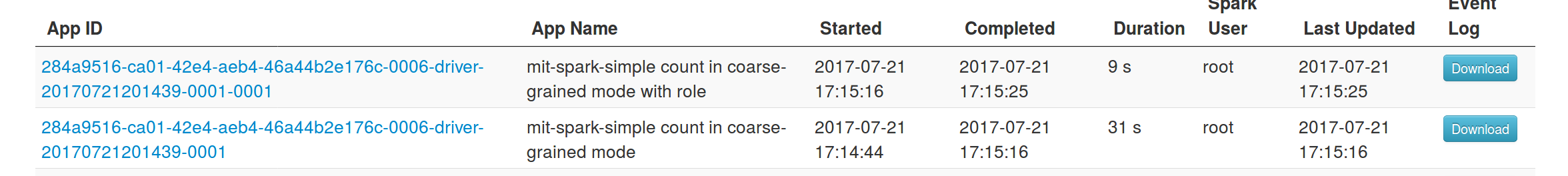

Regarding multiple Spark contexts, the end result in the Spark history server is that, for the second Spark context, we add an increasing number as a suffix.

Author: Stavros Kontopoulos <st.kontopoulos@gmail.com>

Closes apache#18705 from skonto/fix_supervise_flag.

1 parent e416704 commit f63527a

2 files changed (+20, -3) under resource-managers/mesos/src/main/scala/org/apache/spark/scheduler/cluster/mesos

Lines changed: 2 additions & 1 deletion

| Original file line number | Diff line number | Diff line change |
|---|---|---|
| 370-372 | 370-372 | |
| 373 | | - |
| | 373-374 | + |
| 374-376 | 375-377 | |

Lines changed: 18 additions & 2 deletions

| Original file line number | Diff line number | Diff line change |
|---|---|---|
| 19-21 | 19-21 | |
| | 22 | + |
| 22-24 | 23-25 | |
| ... | ... | |
| 168-170 | 169-171 | |
| | 172-180 | + |
| 171-173 | 181-183 | |
| ... | ... | |
| 177-179 | 187-189 | |
| 180 | | - |
| | 190 | + |
| 181-182 | 191-192 | |
| 183 | | - |
| 184-186 | 193-195 | |
| ... | ... | |
| 269-271 | 278-280 | |
| | 281 | + |
| 272-274 | 282-284 | |
| ... | ... | |
| 670-672 | 680-682 | |
| | 683-688 | + |
