+{"cells":[{"metadata":{},"cell_type":"markdown","source":" # <div style=\"text-align: center\"> Reducing Memory Size for IEEE </div> \n<div style=\"text-align:center\"> last update: <b> 17/07/2019</b></div>\n"},{"metadata":{},"cell_type":"markdown","source":"## Objective of the Kernel: Save time & memory\nIf you would like to create your own kernel, it is a good idea to add the output of this kernel to it as a data set, because that saves you both time and memory."},{"metadata":{},"cell_type":"markdown","source":"___MEMORY USAGE BEFORE AND AFTER COMPLETION FOR TRAIN:___\n<br/>\nMemory usage before running this script : 1975.3707885742188 MB\n<br/>\nMemory usage after running this script : ~ 480 MB\n<br/>\nThis is ~ 28 % of the initial size"},{"metadata":{},"cell_type":"markdown","source":"\n___MEMORY USAGE BEFORE AND AFTER COMPLETION FOR TEST:___\n<br/>\nMemory usage before running this script : 1693.867820739746 MB\n<br/>\nMemory usage after running this script: ~ 480 MB\n<br/>\nThis is ~ 28 % of the initial size"},{"metadata":{},"cell_type":"markdown","source":"## Import"},{"metadata":{"_uuid":"8f2839f25d086af736a60e9eeb907d3b93b6e0e5","_cell_guid":"b1076dfc-b9ad-4769-8c92-a6c4dae69d19","trusted":true},"cell_type":"code","source":"import numpy as np # linear algebra\nimport pandas as pd # data processing, CSV file I/O (e.g. 
pd.read_csv)\nimport os\nprint(os.listdir(\"../input\"))","execution_count":null,"outputs":[]},{"metadata":{},"cell_type":"markdown","source":"## Import Dataset to play with it"},{"metadata":{"_cell_guid":"79c7e3d0-c299-4dcb-8224-4455121ee9b0","_uuid":"d629ff2d2480ee46fbb7e2d37f6b5fab8052498a","trusted":true},"cell_type":"code","source":"# Import the dataset to play with it\ntrain_identity = pd.read_csv(\"../input/train_identity.csv\", index_col='TransactionID')\ntrain_transaction = pd.read_csv(\"../input/train_transaction.csv\", index_col='TransactionID')\ntest_identity = pd.read_csv(\"../input/test_identity.csv\", index_col='TransactionID')\ntest_transaction = pd.read_csv(\"../input/test_transaction.csv\", index_col='TransactionID')","execution_count":null,"outputs":[]},{"metadata":{},"cell_type":"markdown","source":"### Create our train & test dataset"},{"metadata":{"trusted":true},"cell_type":"code","source":"# Create our train & test dataset: left-join identity onto transaction by TransactionID\ntrain = train_transaction.merge(train_identity, how='left', left_index=True, right_index=True)\ntest = test_transaction.merge(test_identity, how='left', left_index=True, right_index=True)","execution_count":null,"outputs":[]},{"metadata":{},"cell_type":"markdown","source":"### Before Reducing Memory\nRight after reading the data sets and joining them, I saw that my RAM usage was more than 9GB!"},{"metadata":{},"cell_type":"markdown","source":""},{"metadata":{},"cell_type":"markdown","source":"So we should first delete the intermediate data frames we no longer need!"},{"metadata":{"trusted":true},"cell_type":"code","source":"del train_identity, train_transaction, test_identity, test_transaction","execution_count":null,"outputs":[]},{"metadata":{},"cell_type":"markdown","source":"\n3GB of RAM has been freed! 
Now let's check the size of our train & test"},{"metadata":{"trusted":true},"cell_type":"code","source":"train.info()","execution_count":null,"outputs":[]},{"metadata":{"trusted":true},"cell_type":"code","source":"test.info()","execution_count":null,"outputs":[]},{"metadata":{},"cell_type":"markdown","source":"# IEEE Reducing Memory Size"},{"metadata":{"trusted":true,"_kg_hide-output":true},"cell_type":"code","source":"#Based on this great kernel https://www.kaggle.com/arjanso/reducing-dataframe-memory-size-by-65\ndef reduce_mem_usage(df):\n    start_mem_usg = df.memory_usage().sum() / 1024**2\n    print(\"Memory usage of properties dataframe is :\",start_mem_usg,\" MB\")\n    NAlist = [] # Keeps track of columns that have missing values filled in.\n    for col in df.columns:\n        if df[col].dtype != object:  # Exclude strings\n            # Print current column type\n            print(\"******************************\")\n            print(\"Column: \",col)\n            print(\"dtype before: \",df[col].dtype)\n            # Track whether the column is integer-valued, plus its max and min\n            IsInt = False\n            mx = df[col].max()\n            mn = df[col].min()\n            # Integer does not support NA, therefore, NA needs to be filled\n            if not np.isfinite(df[col]).all():\n                NAlist.append(col)\n                # The fill value becomes the new minimum, so update mn before\n                # choosing a dtype (otherwise mn-1 can wrap around in an unsigned type)\n                mn = mn - 1\n                df[col] = df[col].fillna(mn)\n\n            # test if column can be converted to an integer\n            # (absolute differences, so positive and negative fractions cannot cancel out)\n            asint = df[col].fillna(0).astype(np.int64)\n            result = (df[col] - asint).abs().sum()\n            if result < 0.01:\n                IsInt = True\n            # Make Integer/unsigned Integer datatypes\n            if IsInt:\n                if mn >= 0:\n                    if mx < 255:\n                        df[col] = df[col].astype(np.uint8)\n                    elif mx < 65535:\n                        df[col] = df[col].astype(np.uint16)\n                    elif mx < 4294967295:\n                        df[col] = df[col].astype(np.uint32)\n                    else:\n                        df[col] = df[col].astype(np.uint64)\n                else:\n                    if mn > np.iinfo(np.int8).min and mx < np.iinfo(np.int8).max:\n                        df[col] = df[col].astype(np.int8)\n                    elif mn > np.iinfo(np.int16).min and mx < np.iinfo(np.int16).max:\n                        df[col] = df[col].astype(np.int16)\n                    elif mn > np.iinfo(np.int32).min and mx < np.iinfo(np.int32).max:\n                        df[col] = df[col].astype(np.int32)\n                    elif mn > np.iinfo(np.int64).min and mx < np.iinfo(np.int64).max:\n                        df[col] = df[col].astype(np.int64)\n            # Make float datatypes 32 bit\n            else:\n                df[col] = df[col].astype(np.float32)\n\n            # Print new column type\n            print(\"dtype after: \",df[col].dtype)\n            print(\"******************************\")\n    # Print final result\n    print(\"___MEMORY USAGE AFTER COMPLETION:___\")\n    mem_usg = df.memory_usage().sum() / 1024**2\n    print(\"Memory usage is: \",mem_usg,\" MB\")\n    print(\"This is \",100*mem_usg/start_mem_usg,\"% of the initial size\")\n    return df, NAlist","execution_count":null,"outputs":[]},{"metadata":{},"cell_type":"markdown","source":"Reducing for train data set:"},{"metadata":{"trusted":true,"_kg_hide-output":true},"cell_type":"code","source":"train, NAlist = reduce_mem_usage(train)\nprint(\"_________________\")\nprint(\"\")\nprint(\"Warning: the following columns have missing values filled with 'df['column_name'].min() -1': \")\nprint(\"_________________\")\nprint(\"\")\nprint(NAlist)","execution_count":null,"outputs":[]},{"metadata":{},"cell_type":"markdown","source":"Reducing for test data set:"},{"metadata":{"trusted":true,"_kg_hide-output":true},"cell_type":"code","source":"test, NAlist = reduce_mem_usage(test)\nprint(\"_________________\")\nprint(\"\")\nprint(\"Warning: the following columns have missing values filled with 'df['column_name'].min() -1': \")\nprint(\"_________________\")\nprint(\"\")\nprint(NAlist)","execution_count":null,"outputs":[]},{"metadata":{},"cell_type":"markdown","source":"check again! 
our RAM."},{"metadata":{},"cell_type":"markdown","source":""},{"metadata":{"trusted":true},"cell_type":"code","source":"train.info()","execution_count":null,"outputs":[]},{"metadata":{"trusted":true},"cell_type":"code","source":"test.info()","execution_count":null,"outputs":[]},{"metadata":{},"cell_type":"markdown","source":"## Add this kernel as Dataset\nNow we just save our output as CSV files. Then you can simply add them to your own kernel, which will save you time and memory."},{"metadata":{"trusted":true},"cell_type":"code","source":"train.to_csv('train.csv', index=False)\ntest.to_csv('test.csv', index=False)","execution_count":null,"outputs":[]},{"metadata":{},"cell_type":"markdown","source":"## What about other ways?\nI have used this [great kernel](https://www.kaggle.com/arjanso/reducing-dataframe-memory-size-by-65), but there are other ways too, such as:\n1. https://www.dataquest.io/blog/pandas-big-data/\n2. [optimizing-the-size-of-a-pandas-dataframe-for-low-memory-environment](https://medium.com/@vincentteyssier/optimizing-the-size-of-a-pandas-dataframe-for-low-memory-environment-5f07db3d72e)\n3. [pandas-making-dataframe-smaller-faster](https://www.ritchieng.com/pandas-making-dataframe-smaller-faster/)"}],"metadata":{"kernelspec":{"display_name":"Python 3","language":"python","name":"python3"},"language_info":{"name":"python","version":"3.6.4","mimetype":"text/x-python","codemirror_mode":{"name":"ipython","version":3},"pygments_lexer":"ipython3","nbconvert_exporter":"python","file_extension":".py"}},"nbformat":4,"nbformat_minor":1}
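
One more "other way", in the same spirit as the links listed at the end of the notebook: pandas can pick the smaller dtype itself through `pd.to_numeric(..., downcast=...)`. The sketch below is only an illustration and is not part of the kernel; the helper name `downcast_numeric` and the small `demo` frame are assumptions made up for this example. Unlike `reduce_mem_usage`, it leaves missing values as NaN, so columns with gaps stay floating point instead of being filled with `min - 1`.

```python
import numpy as np
import pandas as pd


def downcast_numeric(df):
    """Return a copy of df whose numeric columns use smaller dtypes (hypothetical helper)."""
    out = df.copy()
    for col in out.columns:
        kind = out[col].dtype.kind
        if kind in "iu":
            # Integer columns: let pandas choose the smallest signed integer type that fits
            out[col] = pd.to_numeric(out[col], downcast="integer")
        elif kind == "f":
            # Float columns: downcast to float32 when the values survive the conversion
            out[col] = pd.to_numeric(out[col], downcast="float")
        # Object (string) and other columns are left untouched
    return out


# Toy data standing in for the real train/test frames (assumption for illustration)
demo = pd.DataFrame({
    "count": np.arange(1000, dtype=np.int64),
    "amount": np.linspace(0.0, 1.0, 1000),
    "label": ["a"] * 1000,
})
small = downcast_numeric(demo)

print("bytes before:", demo.memory_usage().sum())
print("bytes after: ", small.memory_usage().sum())
print(small.dtypes)
```

On the real data this would be called as `train = downcast_numeric(train)`. Because nothing is filled in, no `NAlist` is needed, at the cost of keeping NaN-containing integer-like columns as floats.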

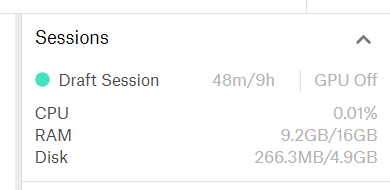

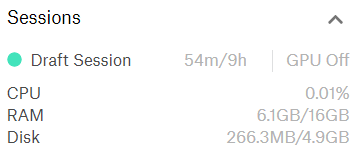

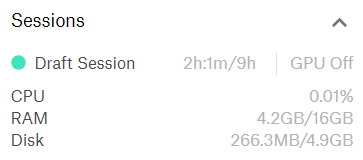
