Add this suggestion to a batch that can be applied as a single commit.

This suggestion is invalid because no changes were made to the code.

Suggestions cannot be applied while the pull request is closed.

Suggestions cannot be applied while viewing a subset of changes.

Only one suggestion per line can be applied in a batch.

Add this suggestion to a batch that can be applied as a single commit.

Applying suggestions on deleted lines is not supported.

You must change the existing code in this line in order to create a valid suggestion.

Outdated suggestions cannot be applied.

This suggestion has been applied or marked resolved.

Suggestions cannot be applied from pending reviews.

Suggestions cannot be applied on multi-line comments.

Suggestions cannot be applied while the pull request is queued to merge.

Suggestion cannot be applied right now. Please check back later.

Branch 2.3 #21726

New issue

Have a question about this project? Sign up for a free GitHub account to open an issue and contact its maintainers and the community.

By clicking “Sign up for GitHub”, you agree to our terms of service and privacy statement. We’ll occasionally send you account related emails.

Already on GitHub? Sign in to your account

Uh oh!

There was an error while loading. Please reload this page.

Branch 2.3 #21726

Changes from 1 commit

70be6034e138209632c462b80571befb22d43f5e403737c3d1c81c0cdbb1b39ab01ba7320ffb14f6a457bb26bdbfd66a3ba5a8a86bd83f7b129fd45f2c0585d24d131bae444903960fa0bd776575bb19accb0a598360da044095cbc7a0deaa1ee6f11d78f033e7269e373ac6423ba441a0d7949992447f285b841578607b1f180cd6eee54530242b6fe9cb4adfa4379a4eb1e42aa66eb56cfbd98fe20e1f12fa1326a8a67c8aa6fb88dd335232b9f84550673911b83db9ea2e866c19788cd6a96ee6e79786ca9151dd37ff404f7e28ff8e16bc5ce043ec25d5b083bd15bd306c265e61ea8e357a33ba8dbf3efbfa0663b61a9d078472c13ed2e1e27452a52d599f5c0bd9e1f7021b6de4693757180e794392049395c1c03d2f82c03c854b6c0b880db1e552b34b9f33fc9acd464da8c221d0d0a545761cece0fbecea44783523fcaf57026a12fd7aca328dea61c39dfa38c0bd70bfbcaf51630451365d733f5955a507cff2f1f10da6ca6483ce15651f36bdb428c9adba81e2030b7b8ccf93667fccc4a201a537a2bf1dabe0f2aabc320269eacfc15603a4dfd5712695908c6812995b79dfdf1bbd4f204c9857e24564019b6b99d5ba1c56b65bcb7bd130641132bec6c7fb1117fb96821be184d19b562d68eb64a5d914100c2f4ee78eb9a411c3e820096defd07ec75c4a10df0df45ddb235ec9e52a420f682f05d88abf7bb3adb530fe53b610e2f1fbfe50b661e7bc08509284d35eb2f3f78f60f87785a3a22fea4dc6719aba52f48889d78eab10f9323dc3a16cd9ac4c49b12414e4e31d598b77de4bef867d94888003f02f60df0a8ee5706dfb515cc93bc9eb7b373a886dc2e16ab6fd4a892a1708de228973e1895c95ed88f3e470b866593258d8efe183fed0060cded67093d2ae0b75e2cd1068c4aef48d624d0f30e354aeae7a06fc459b0f6f58bb6c22a9700cbfec43fe49a6c2b66289a3e1c0ab13a024a4dc24da2b37e76fe56266a1cc5f682e0c346e4e96f930aaa5a21800b8181945436f1d5e15829454d4548abf5868763e1da1a55de38470cacda2f65ebe6bf3257f1708ad3255a5a7d378ed4261049d63e54b8dbfcc50cdb41d687d978928de33a4b6f3a1e9640db538b26e1f5e00f534d38ff4b973c0af791cba050bc7ee75e5cc5f664c72b44df06b472eb97c19542f5307499e86457a132429259cf375fb3726daf9a2b0adae352ae31b4767be70e2File filter

Filter by extension

Conversations

Uh oh!

There was an error while loading. Please reload this page.

Jump to

Uh oh!

There was an error while loading. Please reload this page.

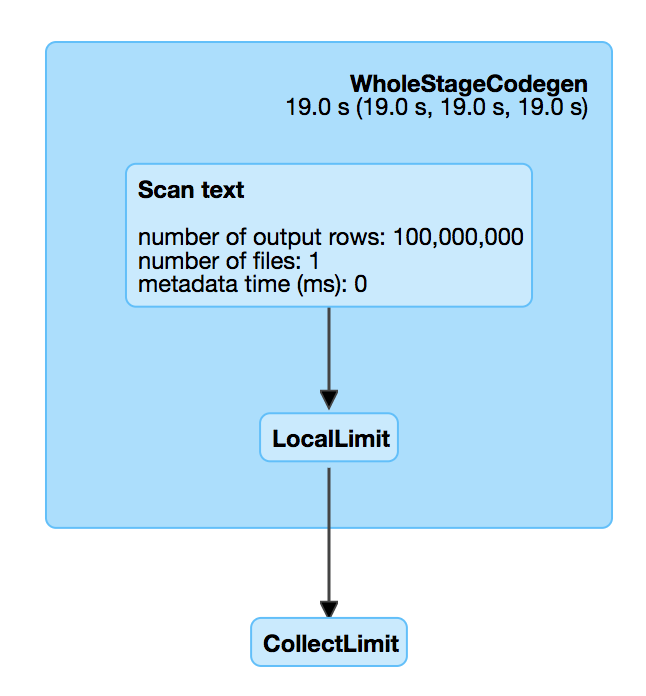

## What changes were proposed in this pull request? There is a performance regression in Spark 2.3. When we read a big compressed text file which is un-splittable(e.g. gz), and then take the first record, Spark will scan all the data in the text file which is very slow. For example, `spark.read.text("/tmp/test.csv.gz").head(1)`, we can check out the SQL UI and see that the file is fully scanned.  This is introduced by #18955 , which adds a LocalLimit to the query when executing `Dataset.head`. The foundamental problem is, `Limit` is not well whole-stage-codegened. It keeps consuming the input even if we have already hit the limitation. However, if we just fix LIMIT whole-stage-codegen, the memory leak test will fail, as we don't fully consume the inputs to trigger the resource cleanup. To fix it completely, we should do the following 1. fix LIMIT whole-stage-codegen, stop consuming inputs after hitting the limitation. 2. in whole-stage-codegen, provide a way to release resource of the parant operator, and apply it in LIMIT 3. automatically release resource when task ends. Howere this is a non-trivial change, and is risky to backport to Spark 2.3. This PR proposes to revert #18955 in Spark 2.3. The memory leak is not a big issue. When task ends, Spark will release all the pages allocated by this task, which is kind of releasing most of the resources. I'll submit a exhaustive fix to master later. ## How was this patch tested? N/A Author: Wenchen Fan <[email protected]> Closes #21573 from cloud-fan/limit.Uh oh!

There was an error while loading. Please reload this page.

There are no files selected for viewing